Enterprise AI Governance in 2026: A Practical Checklist for Leaders

Enterprise LLM adoption is accelerating—but governance often lags behind. In 2026, leaders need a governance model that does two things at once: keep risk under control and keep teams moving fast.

This guide is a practical checklist you can use to stand up an “AI governance baseline” in weeks—not quarters.

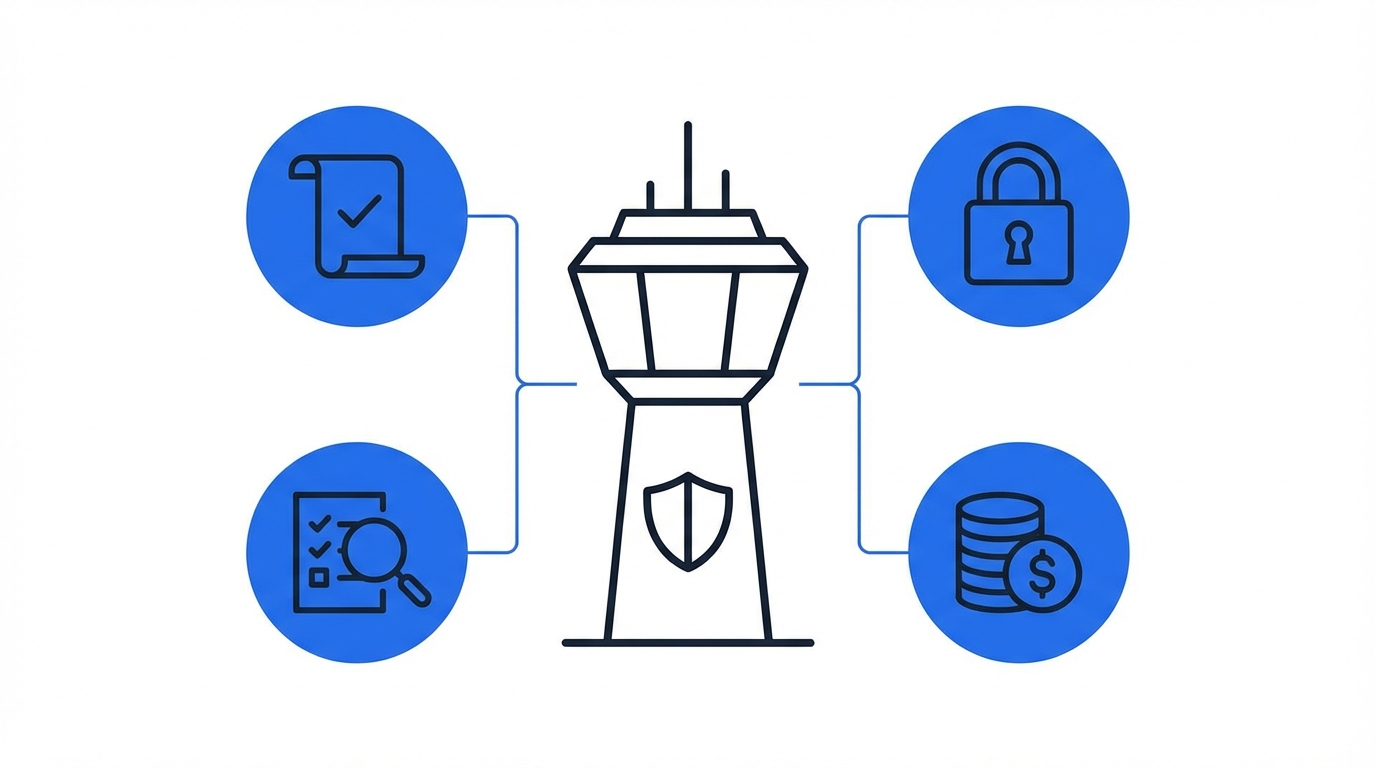

What AI governance should achieve (in plain terms)

A strong baseline answers these questions:

- What data can be used with LLMs—and what is prohibited?

- Who can access which tools, models, and knowledge sources?

- What gets logged (and who reviews it)?

- How do we measure value and control cost?

- Who owns decisions, exceptions, and incident response?

The 2026 governance checklist

1) Data boundary and classification

- Define prohibited data categories (e.g., regulated PII, unreleased financials, legal privileged content).

- Define “allowed with controls” categories (e.g., internal policies, sanitized customer tickets).

- Establish a simple classification scheme employees can actually follow.

- Document where the “source of truth” lives for critical knowledge.
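
The boundary is easier to enforce when tooling can check it, not just read it. Below is a minimal sketch that assumes a hypothetical three-tier scheme (prohibited, allowed with controls, open) and made-up category tags such as `regulated_pii` and `public_marketing`. Your own categories and enforcement point (a DLP tool, an AI gateway, a browser plugin) will differ. Untagged or unknown content fails closed.

```python
from enum import Enum


class DataClass(Enum):
    """Hypothetical three-tier classification; rename tiers to match your policy."""
    PROHIBITED = "prohibited"              # never sent to an LLM
    ALLOWED_WITH_CONTROLS = "controlled"   # approved tools only
    OPEN = "open"                          # no extra controls


# Illustrative category tags mapped to tiers (examples from the checklist above).
CATEGORY_POLICY = {
    "regulated_pii": DataClass.PROHIBITED,
    "unreleased_financials": DataClass.PROHIBITED,
    "legal_privileged": DataClass.PROHIBITED,
    "internal_policy": DataClass.ALLOWED_WITH_CONTROLS,
    "sanitized_ticket": DataClass.ALLOWED_WITH_CONTROLS,
    "public_marketing": DataClass.OPEN,
}


def may_use_with_llm(categories: set[str], approved_tool: bool) -> bool:
    """Return True if content carrying these tags may be sent to an LLM."""
    if not categories:
        return False  # untagged content fails closed
    # Unknown tags are treated as prohibited (fail closed).
    tiers = {CATEGORY_POLICY.get(c, DataClass.PROHIBITED) for c in categories}
    if DataClass.PROHIBITED in tiers:
        return False
    if DataClass.ALLOWED_WITH_CONTROLS in tiers:
        return approved_tool
    return True
```

For example, `may_use_with_llm({"internal_policy"}, approved_tool=False)` returns `False`, because controlled data may only go to approved tools.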

2) Tooling and model policy (approved path)

- Publish an approved list of AI tools (and the business reasons for each).

- Define which workflows must use approved tools (e.g., anything customer-facing).

- Set rules for personal accounts vs enterprise accounts.

- Decide when to use smaller/cheaper models vs premium models (routing guidance).
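
Routing guidance is easiest to follow and audit when it is written as a few explicit rules. Here is a minimal sketch that assumes two placeholder tiers (`small-model` and `premium-model` are not real product names) and hypothetical workflow names. Per-workflow overrides win. Customer-facing or reasoning-heavy work goes to the premium tier, and everything else defaults to the cheaper model.

```python
from dataclasses import dataclass

# Placeholder tier names, not real products.
SMALL_MODEL = "small-model"
PREMIUM_MODEL = "premium-model"

# Hypothetical per-workflow decisions made by the governance group.
ROUTING_OVERRIDES = {
    "contract_review": PREMIUM_MODEL,
    "ticket_tagging": SMALL_MODEL,
}


@dataclass
class Task:
    workflow: str
    customer_facing: bool
    needs_reasoning: bool  # e.g. multi-step analysis or nuanced drafting


def route(task: Task) -> str:
    """Pick a model tier with simple, auditable rules."""
    if task.workflow in ROUTING_OVERRIDES:
        return ROUTING_OVERRIDES[task.workflow]  # explicit decisions win
    if task.customer_facing or task.needs_reasoning:
        return PREMIUM_MODEL
    return SMALL_MODEL  # default to the cheaper tier
```

Keeping the rules this simple is deliberate. Anyone investigating a cost spike or a quality complaint can see why a request went where it did.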

3) Identity, access, and permissions

- Require SSO where possible for enterprise tools.

- Enforce role-based access (RBAC) for sensitive knowledge sources.

- Ensure access respects existing permissions (no “AI bypass” of ACLs).

- Define offboarding procedures (revoking access, keys, connectors).
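
In practice, “no AI bypass of ACLs” means filtering retrieved content by the caller’s identity before anything reaches the model. The sketch below is minimal. It assumes each indexed document carries a copy of its source system’s group ACL, and that offboarding clears a hypothetical `active` flag. In a real deployment the check would ideally run against live permissions from your identity provider, not a copy that can go stale.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # copied from the source system's ACL


@dataclass
class User:
    user_id: str
    groups: set[str] = field(default_factory=set)
    active: bool = True  # hypothetical flag cleared during offboarding


def retrievable_for(user: User, candidates: list[Document]) -> list[Document]:
    """Keep only documents the user could already open in the source system."""
    if not user.active:
        return []  # offboarded users retrieve nothing
    return [d for d in candidates if d.allowed_groups & user.groups]
```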

4) Auditability and logging

- Confirm you can answer: who used which model, with what inputs, and what outputs were produced?

- Decide how long logs are retained.

- Define review cadence (weekly for early rollout, then monthly).

- Establish escalation rules for suspicious prompts or data leakage.
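
A structured record per call makes the “who, which model, what inputs, what outputs” question answerable. The sketch assumes a hypothetical `AuditRecord` shape and an illustrative 90-day retention window. The keyword list used for escalation is deliberately crude; real leakage and prompt-injection detection usually needs dedicated tooling, so treat those markers as placeholders.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=90)  # illustrative; set with legal and privacy
# Crude placeholder markers; real leakage detection needs dedicated tooling.
SUSPICIOUS_MARKERS = ("ignore previous instructions", "social security number", "api_key")


@dataclass
class AuditRecord:
    user_id: str
    model: str
    workflow: str
    prompt: str
    output: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def to_json(self) -> str:
        row = asdict(self)
        row["timestamp"] = self.timestamp.isoformat()
        return json.dumps(row)


def purge_expired(records: list[AuditRecord], now: datetime) -> list[AuditRecord]:
    """Keep only records still inside the retention window."""
    return [r for r in records if now - r.timestamp <= RETENTION]


def needs_escalation(record: AuditRecord) -> bool:
    """Flag a record for human review if its prompt or output contains a marker."""
    text = f"{record.prompt}\n{record.output}".lower()
    return any(marker in text for marker in SUSPICIOUS_MARKERS)
```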

5) Human-in-the-loop design

- Identify workflows that require approval before sending externally.

- Define who is accountable for final output quality (role/owner).

- Provide templates for common tasks (emails, proposals, summaries).

- Require citations or source links for knowledge-based outputs when possible.
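
An approval gate can be a small, explicit check that runs before anything leaves the company. Below is a minimal sketch. It assumes hypothetical workflow names and a `Draft` object that records the accountable owner, the approver, and any source links. It returns every blocking issue at once so the reviewer can fix them together. Missing sources are treated as blocking here; since the checklist says “when possible,” you might downgrade that to a warning.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical workflows that need sign-off before anything is sent externally.
REQUIRES_APPROVAL = {"customer_email", "proposal"}


@dataclass
class Draft:
    workflow: str
    text: str
    owner: str                        # role accountable for final quality
    knowledge_based: bool = False     # answer drawn from internal knowledge
    sources: list[str] = field(default_factory=list)
    approved_by: Optional[str] = None


def release_check(draft: Draft) -> list[str]:
    """Return blocking issues; an empty list means the draft may be sent."""
    issues = []
    if draft.workflow in REQUIRES_APPROVAL and not draft.approved_by:
        issues.append("needs approval")
    if draft.knowledge_based and not draft.sources:
        issues.append("missing sources")
    return issues
```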

6) Quality and evaluation (Evals)

- Define “quality” per workflow (accuracy, completeness, tone, compliance).

- Build a small evaluation set (10–30 examples) for repeatable testing.

- Add regression checks when prompts/tools change.

- Monitor drift over time (new policies, new products, new data).
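
You do not need a framework to start with evals. The sketch below is minimal. It assumes each case lists phrases a correct answer must and must not contain, and that `generate` stands in for whatever function calls your model with the current prompt. Substring checks are crude. They work for factual anchors such as dates, amounts, and policy terms; you would add human or model-graded scoring for tone and completeness.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_include: list[str]      # facts a correct answer must state
    must_not_include: list[str]  # e.g. outdated or non-compliant claims


def passes(case: EvalCase, output: str) -> bool:
    text = output.lower()
    has_required = all(s.lower() in text for s in case.must_include)
    has_forbidden = any(s.lower() in text for s in case.must_not_include)
    return has_required and not has_forbidden


def pass_rate(cases: list[EvalCase], generate: Callable[[str], str]) -> float:
    """Run every case through `generate` (your model call) and return the pass rate."""
    if not cases:
        raise ValueError("eval set is empty")
    return sum(passes(c, generate(c.prompt)) for c in cases) / len(cases)


def regression_ok(baseline: float, candidate: float, tolerance: float = 0.0) -> bool:
    """Accept a prompt/tool change only if the pass rate has not dropped beyond tolerance."""
    return candidate >= baseline - tolerance
```

Run the same cases before and after every prompt or tool change. `regression_ok` rejects the change if the pass rate drops by more than the tolerance you choose.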

7) Cost controls (predictability over minimization)

- Track usage by team/workflow.

- Set budgets with alerts and soft limits.

- Use caching/reuse patterns for repeated queries where appropriate.

- Review ROI quarterly: which workflows deliver measurable impact?
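
A few dozen lines are enough to sketch budgets with alerts and soft limits, plus a basic cache. The sketch assumes hypothetical per-team monthly budgets in USD and an illustrative 80% alert threshold. The cache is keyed on the model name plus the prompt with whitespace normalized. “Soft” here means the call is still allowed but flagged for review. Only cache where identical prompts should get identical answers, and never share cached answers across users with different permissions.

```python
import hashlib
from collections import defaultdict
from typing import Callable


class CostTracker:
    """Tracks spend per (team, workflow) against hypothetical monthly team budgets."""

    def __init__(self, budgets_usd: dict[str, float], alert_ratio: float = 0.8):
        self.budgets = budgets_usd
        self.alert_ratio = alert_ratio   # warn at 80% of budget by default
        self.spend = defaultdict(float)  # (team, workflow) -> USD
        self.alerts: list[str] = []

    def team_total(self, team: str) -> float:
        return sum(v for (t, _), v in self.spend.items() if t == team)

    def record(self, team: str, workflow: str, cost_usd: float) -> str:
        """Record spend; return 'ok', 'alert', or 'over_soft_limit'."""
        before = self.team_total(team)
        self.spend[(team, workflow)] += cost_usd
        after = before + cost_usd
        limit = self.budgets.get(team, 0.0)  # unbudgeted teams are flagged immediately
        if after > limit:
            # Soft limit: the call is not blocked, only surfaced for review.
            self.alerts.append(f"{team}: over budget ({after:.2f} / {limit:.2f} USD)")
            return "over_soft_limit"
        if before < self.alert_ratio * limit <= after:
            self.alerts.append(f"{team}: passed {self.alert_ratio:.0%} of budget")
            return "alert"
        return "ok"


class ResponseCache:
    """Reuses answers for repeated (model, prompt) pairs."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        normalized = " ".join(prompt.split())  # collapse whitespace only
        return hashlib.sha256(f"{model}\n{normalized}".encode()).hexdigest()

    def get_or_call(self, model: str, prompt: str, call: Callable[[str, str], str]) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        result = call(model, prompt)
        self._store[key] = result
        return result
```

Per-workflow totals in `spend` feed directly into the quarterly ROI review: they show which workflows consume budget, to compare against the impact each one delivers.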

8) Security and legal operating cadence

- Name a cross-functional owner group (security, legal, IT, business).

- Create an intake form for new use cases (lightweight, fast).

- Maintain vendor risk documentation (data handling, retention, and whether your data is used for model training).

- Define incident response runbooks (who does what, when).

A simple operating model that works

If you want a minimal structure:

- Central team sets guardrails, templates, logging, and approved tooling.

- Business teams own use cases, adoption, and outcome metrics.

- A short weekly review handles exceptions and escalations.

Closing thought

In 2026, AI governance is not about saying “no.” It’s about building a safe default—and a fast path to value.

Next: I’ll share a simple scorecard for evaluating AI tools for enterprise adoption (security, UX, integrations, cost, and ROI).